Explainable AI: The role of reservoir connectivity in Echo State Networks

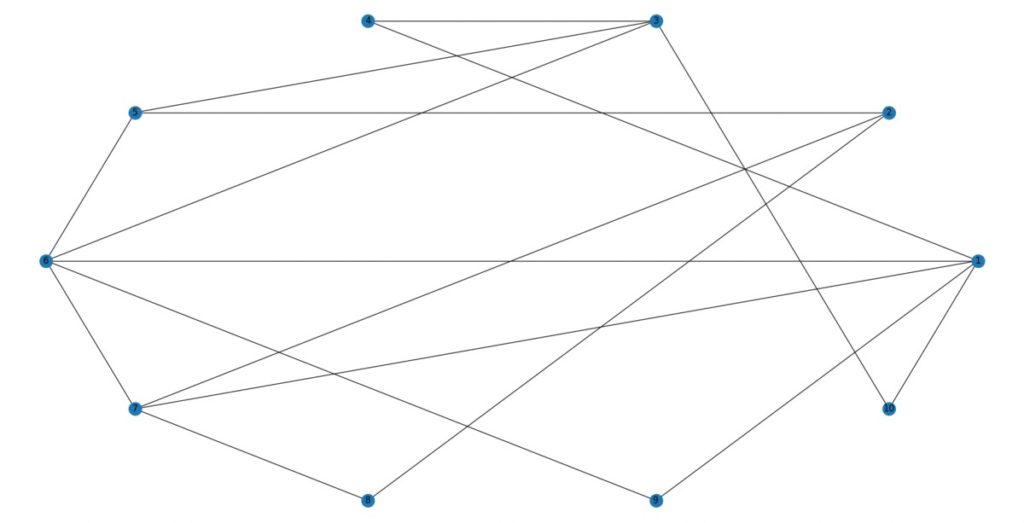

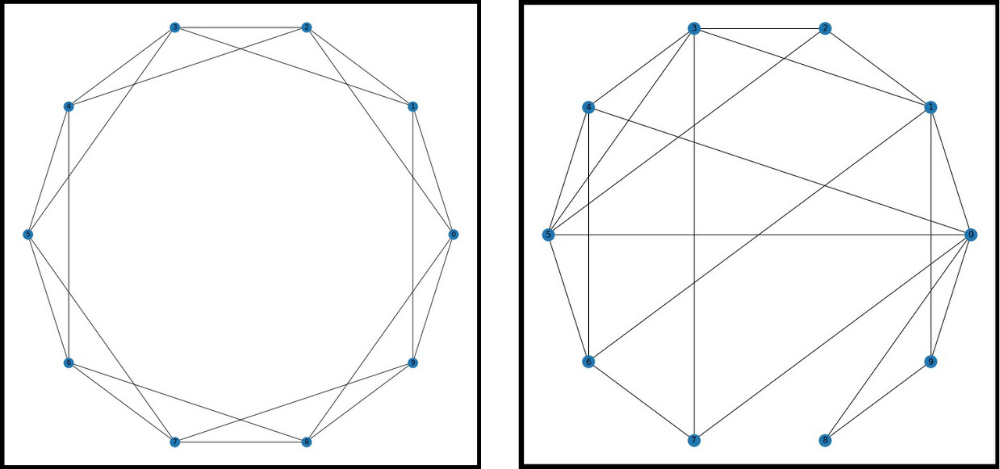

Echo State Networks (ESNs) are a type of recurrent neural network (RNN) composed of (a) an input layer, (b) a large, sparsely connected, randomly initialized "reservoir" whose weights stay fixed during training and testing, and (c) an output layer whose weights are the only ones that are trained. This design makes ESNs efficient learners for sequential data and time-series forecasting. Because only the output layer is trained, the properties of the reservoir strongly affect how well the network learns, so we build reservoirs from different network models in search of the best performance. Two typical models are the Erdos-Renyi random graph (see Fig. 1) and the Watts-Strogatz, or small-world, network (see Fig. 2).
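The setup described above can be sketched in a few lines of Python with NumPy. This is a minimal sketch under our own assumptions, not the implementation used in this project: the function names (`erdos_renyi_adjacency`, `watts_strogatz_adjacency`, `scale_to_spectral_radius`, `run_reservoir`, `train_readout`, `demo`), the parameter values (200 reservoir nodes, link probability 0.05, k = 10 neighbours, rewiring probability 0.1, spectral radius 0.9), the sine-wave forecasting task and the ridge-regression readout are all illustrative choices. The reservoir matrix `w` and the input weights `w_in` are generated once and never changed; only the output weights `w_out` are fitted.

```python
import numpy as np


def erdos_renyi_adjacency(n, p, rng):
    """Undirected G(n, p) graph: each pair i < j is linked independently with probability p."""
    upper = np.triu(rng.random((n, n)) < p, k=1)
    return (upper | upper.T).astype(float)


def watts_strogatz_adjacency(n, k, beta, rng):
    """Ring lattice where every node links to its k nearest neighbours (k even);
    each lattice edge is then rewired to a random new endpoint with probability beta."""
    a = np.zeros((n, n), dtype=bool)
    for i in range(n):
        for d in range(1, k // 2 + 1):
            j = (i + d) % n
            a[i, j] = a[j, i] = True
    for i in range(n):
        for d in range(1, k // 2 + 1):
            j = (i + d) % n
            if a[i, j] and rng.random() < beta:
                # Allowed new endpoints: not i itself and not already linked to i.
                candidates = np.flatnonzero(~a[i])
                candidates = candidates[candidates != i]
                if candidates.size == 0:
                    continue
                new_j = rng.choice(candidates)
                a[i, j] = a[j, i] = False
                a[i, new_j] = a[new_j, i] = True
    return a.astype(float)


def scale_to_spectral_radius(adj, rho, rng):
    """Put random signed weights on the existing links, then rescale so the
    largest absolute eigenvalue equals rho."""
    w = adj * rng.uniform(-1.0, 1.0, adj.shape)
    radius = np.max(np.abs(np.linalg.eigvals(w)))
    return w * (rho / radius)


def run_reservoir(w, w_in, inputs, leak=1.0):
    """Drive the fixed reservoir with a scalar input sequence and collect its states.
    leak < 1 gives a leaky-integrator update; leak = 1 is the plain tanh update."""
    x = np.zeros(w.shape[0])
    states = np.empty((len(inputs), w.shape[0]))
    for t, u_t in enumerate(inputs):
        x = (1.0 - leak) * x + leak * np.tanh(w @ x + w_in * u_t)
        states[t] = x
    return states


def train_readout(states, targets, ridge=1e-6):
    """Ridge regression for the output weights, the only trained parameters."""
    gram = states.T @ states + ridge * np.eye(states.shape[1])
    return np.linalg.solve(gram, states.T @ targets)


def demo(topology="erdos_renyi", n=200, seed=0):
    """One-step-ahead prediction of a sine wave; returns the test mean squared error."""
    rng = np.random.default_rng(seed)
    if topology == "erdos_renyi":
        adj = erdos_renyi_adjacency(n, p=0.05, rng=rng)
    else:
        adj = watts_strogatz_adjacency(n, k=10, beta=0.1, rng=rng)
    w = scale_to_spectral_radius(adj, rho=0.9, rng=rng)
    w_in = rng.uniform(-0.5, 0.5, n)

    signal = np.sin(0.1 * np.arange(2000))
    states = run_reservoir(w, w_in, signal[:-1])  # states[t] has seen signal[0..t]
    washout, split = 100, 1500                    # discard the initial transient
    w_out = train_readout(states[washout:split], signal[washout + 1:split + 1])
    prediction = states[split:] @ w_out
    return np.mean((prediction - signal[split + 1:]) ** 2)


for name in ("erdos_renyi", "watts_strogatz"):
    print(name, demo(name))
```

Switching `topology` while keeping every other setting fixed is one simple way to compare how reservoir connectivity affects performance. Rescaling to a spectral radius below 1 is a common heuristic for obtaining the echo state property, not a guarantee, and the `leak` parameter mimics the leaky-integrator neurons studied by Jaeger et al. (2007) only in a simplified discrete-time form.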

Literature

- Albert Laszlo Barabasi, Network Science, Cambridge University Press, Cambridge, 2016.

- Paul Erdos and Alfred Renyi, On random graphs I, Publicationes Mathematicae Debrecen, 6, 290-297, 1959, https://doi.org/10.5486/PMD.1959.6.3-4.12

- Duncan J. Watts and Steven H. Strogatz, Collective dynamics of 'small-world' networks, Nature, 393, 440-442, 1998, https://doi.org/10.1038/30918

- Herbert Jaeger, Mantas Lukosevicius, Dan Popovici and Udo Siewert, Optimization and applications of echo state networks with leaky-integrator neurons, Neural Networks, 20, 335-352, 2007, https://doi.org/10.1016/j.neunet.2007.04.016